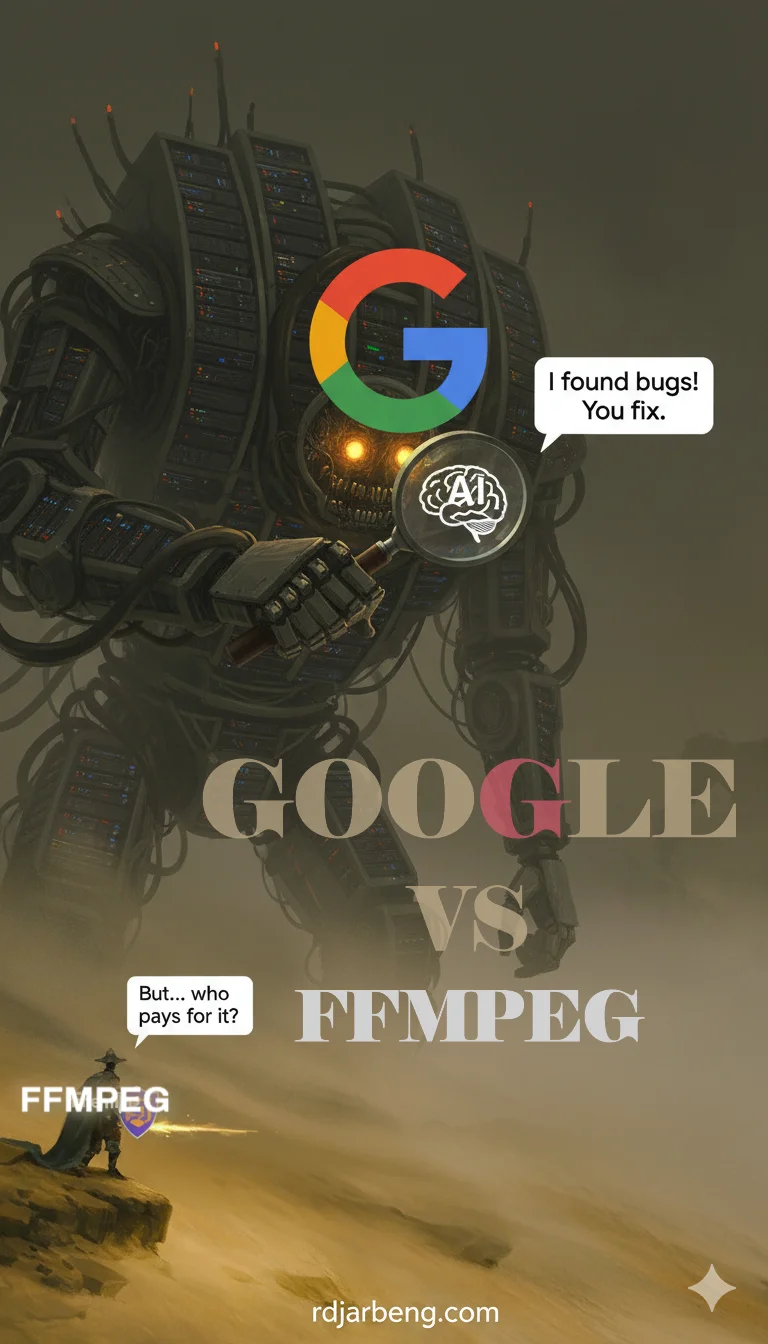

The Google vs. FFmpeg Debate: AI Finds a Bug, But Who Has to Fix It?

In the vast world of software, a recent conflict has flared up between Google’s elite security team and the volunteer maintainers of FFmpeg, a project that powers countless apps you use every day. This isn’t just a technical squabble; it’s a story about AI, corporate responsibility, and the foundations of the open-source world.

What happens when a trillion-dollar company uses advanced AI to find flaws in software run by volunteers? And who is responsible for fixing the mess? Let’s break down what happened.

What is FFmpeg, and Why Does It Matter?

First, some quick context. FFmpeg is a free, open-source software library that is the backbone of digital media.

Think of it as a universal translator for video and audio. If you’ve ever:

- Watched a video on YouTube or TikTok

- Used a media player like VLC

- Streamed a video in the Chrome browser

…you have very likely used FFmpeg. It’s the essential, invisible plumbing that makes digital media work. Google itself has relied on FFmpeg for over a decade to power core parts of YouTube and Android. You can learn more in the official FFmpeg documentation.

The Open-Source “Bargain”

This brings us to the core tension. FFmpeg is “open-source,” meaning its code is public and built by a community. Like many critical open-source projects, it’s maintained primarily by unpaid volunteers who work on it in their spare time.

This creates a paradox:

- For companies like Google, FFmpeg is invaluable, free infrastructure.

- For the maintainers, it’s a passion project that happens to be used by billions of people.

This model works, until it doesn’t. Past crises like the 2014 Heartbleed bug in OpenSSL (which exposed sensitive data on servers across the internet) showed how thin the volunteer-run foundation can be. This new dispute brings that tension back into the spotlight.

The Spark: Google’s AI Finds a “Big Sleep” of Bugs

The dispute ignited in July 2025, when Google’s Project Zero (its team of elite bug hunters) announced a new “Reporting Transparency” policy. In short, the team would publicly announce that a bug exists within about a week of reporting it to the maintainers, while keeping the technical details private for 90 days to give them time to create a fix.
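To make the two deadlines concrete, here is a minimal sketch of the timeline arithmetic. It assumes both clocks start on the day the bug is reported; the function name `disclosure_timeline` and the example report date are made up for illustration and do not come from Project Zero.

```python
from datetime import date, timedelta

# Windows as described in this post: a public notice after about one week,
# technical details after 90 days. Both are measured from the report date,
# which is an assumption of this sketch.
NOTICE_WINDOW = timedelta(days=7)
DETAILS_WINDOW = timedelta(days=90)


def disclosure_timeline(report_date: date) -> dict:
    """Return the key dates for a hypothetical bug report."""
    return {
        "reported": report_date,
        "public_notice": report_date + NOTICE_WINDOW,
        "details_published": report_date + DETAILS_WINDOW,
    }


# Hypothetical report date, chosen only for the example.
timeline = disclosure_timeline(date(2025, 8, 1))
for label, day in timeline.items():
    print(f"{label}: {day.isoformat()}")
```

For a volunteer project, the gap between the second and third dates is the whole window available to write, review, and ship a fix.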

Then, in August 2025, Google unveiled “Big Sleep,” an AI tool that autonomously hunts for vulnerabilities. Big Sleep immediately found about 20 issues across several projects, including FFmpeg.

What’s the Big Deal About These Bugs?

The AI found serious flaws, like “buffer overflows” and “use-after-free” errors.

For the non-tech reader: These are not minor typos. They are the kinds of bugs that, in the worst case, could allow an attacker to crash an application or even run malicious code on someone’s computer.
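For readers who want to see the idea, here is a toy model of a buffer overflow. It is a sketch, not FFmpeg code: real overflows happen in languages like C, and the memory layout, the `is_admin` flag, and the function names below are all invented for illustration.

```python
# Toy "memory": an 8-byte name buffer followed directly by a 1-byte flag.
NAME_SIZE = 8
FLAG_OFFSET = NAME_SIZE  # the flag sits right after the name buffer


def new_memory() -> bytearray:
    """Create zeroed memory: 8 bytes of name plus 1 byte of is_admin flag."""
    return bytearray(NAME_SIZE + 1)


def unsafe_copy(memory: bytearray, data: bytes) -> None:
    """Copy input without checking that it fits in the name buffer."""
    for i, byte in enumerate(data):
        memory[i] = byte  # writes past the name buffer if data is too long


def safe_copy(memory: bytearray, data: bytes) -> None:
    """Copy input only if it fits inside the name buffer."""
    if len(data) > NAME_SIZE:
        raise ValueError("input is larger than the name buffer")
    memory[: len(data)] = data


memory = new_memory()
unsafe_copy(memory, b"A" * NAME_SIZE + b"\x01")  # one byte too many
print("is_admin after unsafe copy:", memory[FLAG_OFFSET])
```

The ninth byte lands on the neighboring flag, which is how an oversized input can change data it was never meant to touch. The fix is usually a length check like the one in `safe_copy`.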

Google’s AI had, in effect, pointed out several security risks in this critical piece of internet infrastructure. Google reported them, as detailed on its issue tracker, and the 90-day clock started ticking.

The Debate: Two Sides of the Story

This is where the conflict exploded.

➡️ Google’s Perspective: “We’re Helping Secure the Internet”

Google frames its actions as responsible disclosure. Their argument, laid out in a Project Zero blog post, is:

- Transparency is good. Publicly disclosing (but not detailing) bugs motivates everyone to patch faster, making users safer.

- Bugs are bugs. These vulnerabilities exist whether an AI finds them or a malicious hacker does. It’s better that Google finds them first.

- They contribute. Google offers a Patch Rewards program (up to $15,000 for FFmpeg) and has provided bug-finding tools like OSS-Fuzz to the project for free.

⬅️ FFmpeg’s Rebuttal: “You Broke It, You Buy It”

The volunteer maintainers of FFmpeg had a sharply different view. In their main X post on the subject, they laid out their core argument:

“We take security very seriously but at the same time is it really fair that trillion dollar corporations run AI to find security issues on people’s hobby code? Then expect volunteers to fix.”

This kicked off a firestorm. As the FFmpeg account on X faced heated comments, it repeatedly hammered home the point that the project is maintained by volunteers, not a paid staff.

This sentiment, that Google should provide fixes (patches) and not just reports, became the rallying cry for their side of the argument.

📣 The Community Weighs In

The tech community was instantly divided.

- Security expert Katie Moussouris argued Google should go one step further and use its AI tools to propose fixes, not just find problems (post).

- Others, like Dino Dai Zovi, noted that Google’s bug reports didn’t even mention their own bounty program, which felt like a missed opportunity.

It looks like there is a $15k bounty out for an accepted PR that fixes the vulnerability identified by Big Sleep in @FFmpeg:https://t.co/C3v0sikr26

— Dino A. Dai Zovi (@dinodaizovi) November 5, 2025

I certainly didn't remember that this program existed, would be a different vibe to mention it in the bug report sent to project… https://t.co/UetMYTg0xj

Side note: If you want to know more about bug bounties and the rewards companies pay for finding bugs, this post is a good place to start: What on earth is a bug bounty?

- Some critics called it a “perverse incentive” (post).

- Broader takes, like this one on PiunikaWeb, framed it as corporations “privatizing the gains while socializing the risks” of open source.

Status as of November 11, 2025: Patches Land, Tensions Linger

So, what happened? The good news is that the immediate danger is over. By November 8, all the vulnerabilities Google’s AI found had been patched by the FFmpeg team and others.

The bad news? The philosophical fight is far from over.

There has been no formal resolution. Google’s policy trial continues, and the open-source community remains divided. The relationship between the world’s biggest companies and the volunteers they depend on is as fragile as ever.

Final Thoughts: Who Owes What to Whom?

This clash between Google and FFmpeg reveals the fragile heart of our modern digital world. AI is getting incredibly good at finding problems, but we haven’t figured out who is responsible for solving them.

It leaves us with critical questions for the future of software:

- Is finding a bug a “gift” to a project, or is it an unfunded mandate?

- Do corporations that profit from open-source owe volunteers their time, their money, or just a “thank you”?

- As AI tools get even more powerful, will they “shift-left” security and make us all safer, or will they simply burn out the human volunteers who keep the internet running?

What do you think?

Ending this post with a message from FFmpeg on X/Twitter:

We would like to thank everyone who sent messages of support to FFmpeg this week!

— FFmpeg (@FFmpeg) November 7, 2025

Sources: Insights drawn from Google Project Zero announcements, FFmpeg communications, and community discussions as of November 8, 2025. Images in this post were generated by Google Gemini AI (nano-banana).

If you’re a developer, consider contributing to FFmpeg’s tracker or supporting them via sponsors.

The cover image from this post is inspired by this picture of a Scary robot concept Art.